Merge origin/dev into pr-1774-review and resolve watcher conflicts


@@ -31,6 +31,7 @@ bin/*

.agent/*
.agents/*
.opencode/*
.idea/*
.bmad/*
_bmad/*
_bmad-output/*

@@ -41,6 +41,7 @@ GEMINI.md
.agents/*
.agents/*
.opencode/*
.idea/*
.bmad/*
_bmad/*
_bmad-output/*

@@ -10,11 +10,11 @@ So you can use local or multi-account CLI access with OpenAI(include Responses)/

|

||||

|

||||

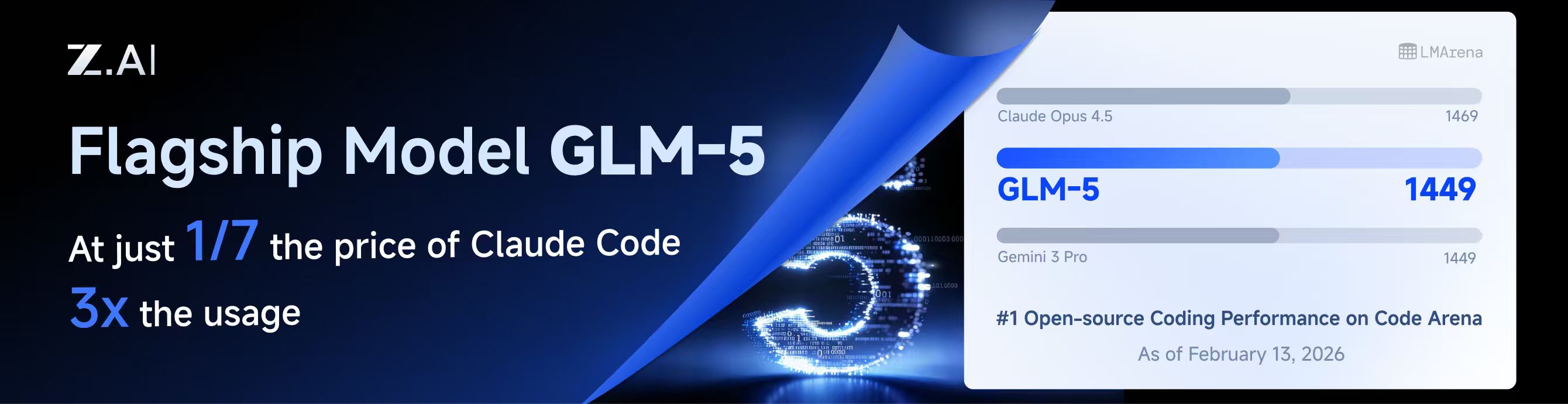

## Sponsor

|

||||

|

||||

[](https://z.ai/subscribe?ic=8JVLJQFSKB)

|

||||

[](https://z.ai/subscribe?ic=8JVLJQFSKB)

|

||||

|

||||

This project is sponsored by Z.ai, supporting us with their GLM CODING PLAN.

|

||||

|

||||

GLM CODING PLAN is a subscription service designed for AI coding, starting at just $3/month. It provides access to their flagship GLM-4.7 model across 10+ popular AI coding tools (Claude Code, Cline, Roo Code, etc.), offering developers top-tier, fast, and stable coding experiences.

|

||||

GLM CODING PLAN is a subscription service designed for AI coding, starting at just $10/month. It provides access to their flagship GLM-4.7 & (GLM-5 Only Available for Pro Users)model across 10+ popular AI coding tools (Claude Code, Cline, Roo Code, etc.), offering developers top-tier, fast, and stable coding experiences.

|

||||

|

||||

Get 10% OFF GLM CODING PLAN:https://z.ai/subscribe?ic=8JVLJQFSKB

|

||||

|

||||

|

||||


@@ -10,13 +10,13 @@

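## Sponsor

[](https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII)
[](https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII)

This project is sponsored by Zhipu (Z智谱), which provides technical support to the project through GLM CODING PLAN.

GLM CODING PLAN is a subscription plan built for AI coding. For as little as 20 yuan per month, you can use Zhipu's flagship GLM-4.7 model in more than ten mainstream AI coding tools such as Claude Code, Cline, and Roo Code, giving developers a top-tier coding experience.
GLM CODING PLAN is a subscription plan built for AI coding. For as little as 20 yuan per month, you can use Zhipu's flagship GLM-4.7 model (limited by compute capacity, currently open to Pro users only) in more than ten mainstream AI coding tools such as Claude Code, Cline, and Roo Code, giving developers a top-tier coding experience.

Zhipu AI offers a special discount for this software; purchases made through the following link get 10% off: https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII
Zhipu AI offers a special discount for this product; purchases made through the following link get 10% off: https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII

---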

@@ -201,6 +201,9 @@ nonstream-keepalive-interval: 0

#         alias: "vertex-flash" # client-visible alias
#       - name: "gemini-2.5-pro"
#         alias: "vertex-pro"
#     excluded-models: # optional: models to exclude from listing
#       - "imagen-3.0-generate-002"
#       - "imagen-*"

# Amp Integration
# ampcode:

@@ -13,6 +13,7 @@ import (

	"net/http"
	"os"
	"path/filepath"
	"runtime"
	"sort"
	"strconv"
	"strings"

@@ -42,14 +43,11 @@ import (
var lastRefreshKeys = []string{"last_refresh", "lastRefresh", "last_refreshed_at", "lastRefreshedAt"}

const (
	anthropicCallbackPort   = 54545
	geminiCallbackPort      = 8085
	codexCallbackPort       = 1455
	geminiCLIEndpoint       = "https://cloudcode-pa.googleapis.com"
	geminiCLIVersion        = "v1internal"
	geminiCLIUserAgent      = "google-api-nodejs-client/9.15.1"
	geminiCLIApiClient      = "gl-node/22.17.0"
	geminiCLIClientMetadata = "ideType=IDE_UNSPECIFIED,platform=PLATFORM_UNSPECIFIED,pluginType=GEMINI"
	anthropicCallbackPort = 54545
	geminiCallbackPort    = 8085
	codexCallbackPort     = 1455
	geminiCLIEndpoint     = "https://cloudcode-pa.googleapis.com"
	geminiCLIVersion      = "v1internal"
)

type callbackForwarder struct {

@@ -189,17 +187,6 @@ func startCallbackForwarder(port int, provider, targetBase string) (*callbackFor
	return forwarder, nil
}

func stopCallbackForwarder(port int) {
	callbackForwardersMu.Lock()
	forwarder := callbackForwarders[port]
	if forwarder != nil {
		delete(callbackForwarders, port)
	}
	callbackForwardersMu.Unlock()

	stopForwarderInstance(port, forwarder)
}

func stopCallbackForwarderInstance(port int, forwarder *callbackForwarder) {
	if forwarder == nil {
		return

@@ -641,44 +628,85 @@ func (h *Handler) DeleteAuthFile(c *gin.Context) {

		c.JSON(400, gin.H{"error": "invalid name"})
		return
	}
	full := filepath.Join(h.cfg.AuthDir, filepath.Base(name))
	if !filepath.IsAbs(full) {
		if abs, errAbs := filepath.Abs(full); errAbs == nil {
			full = abs

	targetPath := filepath.Join(h.cfg.AuthDir, filepath.Base(name))
	targetID := ""
	if targetAuth := h.findAuthForDelete(name); targetAuth != nil {
		targetID = strings.TrimSpace(targetAuth.ID)
		if path := strings.TrimSpace(authAttribute(targetAuth, "path")); path != "" {
			targetPath = path
		}
	}
	if err := os.Remove(full); err != nil {
		if os.IsNotExist(err) {
	if !filepath.IsAbs(targetPath) {
		if abs, errAbs := filepath.Abs(targetPath); errAbs == nil {
			targetPath = abs
		}
	}
	if errRemove := os.Remove(targetPath); errRemove != nil {
		if os.IsNotExist(errRemove) {
			c.JSON(404, gin.H{"error": "file not found"})
		} else {
			c.JSON(500, gin.H{"error": fmt.Sprintf("failed to remove file: %v", err)})
			c.JSON(500, gin.H{"error": fmt.Sprintf("failed to remove file: %v", errRemove)})
		}
		return
	}
	if err := h.deleteTokenRecord(ctx, full); err != nil {
		c.JSON(500, gin.H{"error": err.Error()})
	if errDeleteRecord := h.deleteTokenRecord(ctx, targetPath); errDeleteRecord != nil {
		c.JSON(500, gin.H{"error": errDeleteRecord.Error()})
		return
	}
	h.disableAuth(ctx, full)
	if targetID != "" {
		h.disableAuth(ctx, targetID)
	} else {
		h.disableAuth(ctx, targetPath)
	}
	c.JSON(200, gin.H{"status": "ok"})
}
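The new `DeleteAuthFile` body appears to pick its target in two steps: it starts from `AuthDir` joined with the base name, then prefers the `path` attribute recorded on the matching auth entry. A minimal sketch of that ordering follows, using only the standard library. The `authRecord` type and `resolveDeleteTarget` function are hypothetical stand-ins for illustration, not names from the repository.

```go
package main

import (
	"fmt"
	"path/filepath"
	"strings"
)

// authRecord is a hypothetical stand-in for the manager's auth entry.
type authRecord struct {
	ID   string
	Path string // value of the "path" attribute; may be empty
}

// resolveDeleteTarget follows the order seen in the hunk above:
// default to authDir/basename(name), then prefer the recorded path.
func resolveDeleteTarget(authDir, name string, rec *authRecord) (targetPath, targetID string) {
	targetPath = filepath.Join(authDir, filepath.Base(name))
	if rec != nil {
		targetID = strings.TrimSpace(rec.ID)
		if p := strings.TrimSpace(rec.Path); p != "" {
			targetPath = p
		}
	}
	return targetPath, targetID
}

func main() {
	rec := &authRecord{ID: "legacy/user.json", Path: "/data/external/user.json"}
	p, id := resolveDeleteTarget("/data/auth", "user.json", rec)
	fmt.Println(p, id)

	// Without a matching record, the request falls back to the auth directory.
	p, id = resolveDeleteTarget("/data/auth", "../user.json", nil)
	fmt.Println(p, id == "")
}
```

Taking `filepath.Base` of the requested name keeps the fallback inside the auth directory even if the name contains `..` segments. The recorded path is used as-is, which is what the first new test in this commit exercises.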


func (h *Handler) findAuthForDelete(name string) *coreauth.Auth {
	if h == nil || h.authManager == nil {
		return nil
	}
	name = strings.TrimSpace(name)
	if name == "" {
		return nil
	}
	if auth, ok := h.authManager.GetByID(name); ok {
		return auth
	}
	auths := h.authManager.List()
	for _, auth := range auths {
		if auth == nil {
			continue
		}
		if strings.TrimSpace(auth.FileName) == name {
			return auth
		}
		if filepath.Base(strings.TrimSpace(authAttribute(auth, "path"))) == name {
			return auth
		}
	}
	return nil
}
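`findAuthForDelete` tries three matches in order: exact ID, then file name, then the base name of the recorded path. The sketch below reproduces that order against an in-memory list. The `authEntry` type and the `byID` map are assumptions made for the example, since the real manager API is only partly visible in this diff.

```go
package main

import (
	"fmt"
	"path/filepath"
	"strings"
)

// authEntry is a hypothetical, simplified auth record.
type authEntry struct {
	ID, FileName, Path string
}

// findForDelete matches by ID first, then by file name, then by the
// base name of the recorded path, as in the hunk above.
func findForDelete(byID map[string]*authEntry, all []*authEntry, name string) *authEntry {
	name = strings.TrimSpace(name)
	if name == "" {
		return nil
	}
	if a, ok := byID[name]; ok {
		return a
	}
	for _, a := range all {
		if a == nil {
			continue
		}
		if strings.TrimSpace(a.FileName) == name {
			return a
		}
		if filepath.Base(strings.TrimSpace(a.Path)) == name {
			return a
		}
	}
	return nil
}

func main() {
	a := &authEntry{ID: "legacy/x.json", FileName: "x.json", Path: "/ext/x.json"}
	b := &authEntry{ID: "b", Path: "/ext/y.json"}
	byID := map[string]*authEntry{a.ID: a, b.ID: b}
	all := []*authEntry{a, b}

	fmt.Println(findForDelete(byID, all, "legacy/x.json") == a) // ID match
	fmt.Println(findForDelete(byID, all, "x.json") == a)        // file name match
	fmt.Println(findForDelete(byID, all, "y.json") == b)        // path base match
}
```

One detail that may be worth a second look in review: `filepath.Base("")` returns `"."`, so an entry with no recorded path can only match on this branch when the requested name is literally `.`.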


func (h *Handler) authIDForPath(path string) string {
	path = strings.TrimSpace(path)
	if path == "" {
		return ""
	}
	if h == nil || h.cfg == nil {
		return path
	id := path
	if h != nil && h.cfg != nil {
		authDir := strings.TrimSpace(h.cfg.AuthDir)
		if authDir != "" {
			if rel, errRel := filepath.Rel(authDir, path); errRel == nil && rel != "" {
				id = rel
			}
		}
	}
	authDir := strings.TrimSpace(h.cfg.AuthDir)
	if authDir == "" {
		return path
	// On Windows, normalize ID casing to avoid duplicate auth entries caused by case-insensitive paths.
	if runtime.GOOS == "windows" {
		id = strings.ToLower(id)
	}
	if rel, err := filepath.Rel(authDir, path); err == nil && rel != "" {
		return rel
	}
	return path
	return id
}
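The reworked `authIDForPath` derives an ID relative to the auth directory and then lowercases it on Windows. The sketch below shows the same derivation as a free function. Passing `goos` as a parameter is an assumption made so the Windows branch can be exercised on any platform; the hunk itself reads `runtime.GOOS` directly.

```go
package main

import (
	"fmt"
	"path/filepath"
	"runtime"
	"strings"
)

// authIDFor returns path relative to authDir when possible, and lowercases
// the result when goos is "windows" (hypothetical parameter for testability).
func authIDFor(authDir, path, goos string) string {
	path = strings.TrimSpace(path)
	if path == "" {
		return ""
	}
	id := path
	if dir := strings.TrimSpace(authDir); dir != "" {
		if rel, err := filepath.Rel(dir, path); err == nil && rel != "" {
			id = rel
		}
	}
	if goos == "windows" {
		id = strings.ToLower(id)
	}
	return id
}

func main() {
	// Uses the real platform value, as the hunk does.
	fmt.Println(authIDFor("/data/auth", "/data/auth/Codex-User.json", runtime.GOOS))
	// Forces the Windows branch to show the case folding.
	fmt.Println(authIDFor("/data/auth", "/data/auth/Codex-User.json", "windows"))
}
```

Lowercasing only on Windows keeps IDs stable on case-sensitive file systems, where `Codex-User.json` and `codex-user.json` can be different files.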


func (h *Handler) registerAuthFromFile(ctx context.Context, path string, data []byte) error {

@@ -896,10 +924,19 @@ func (h *Handler) disableAuth(ctx context.Context, id string) {
	if h == nil || h.authManager == nil {
		return
	}
	authID := h.authIDForPath(id)
	if authID == "" {
		authID = strings.TrimSpace(id)
	id = strings.TrimSpace(id)
	if id == "" {
		return
	}
	if auth, ok := h.authManager.GetByID(id); ok {
		auth.Disabled = true
		auth.Status = coreauth.StatusDisabled
		auth.StatusMessage = "removed via management API"
		auth.UpdatedAt = time.Now()
		_, _ = h.authManager.Update(ctx, auth)
		return
	}
	authID := h.authIDForPath(id)
	if authID == "" {
		return
	}

@@ -2285,9 +2322,7 @@ func callGeminiCLI(ctx context.Context, httpClient *http.Client, endpoint string
		return fmt.Errorf("create request: %w", errRequest)
	}
	req.Header.Set("Content-Type", "application/json")
	req.Header.Set("User-Agent", geminiCLIUserAgent)
	req.Header.Set("X-Goog-Api-Client", geminiCLIApiClient)
	req.Header.Set("Client-Metadata", geminiCLIClientMetadata)
	req.Header.Set("User-Agent", misc.GeminiCLIUserAgent(""))

	resp, errDo := httpClient.Do(req)
	if errDo != nil {

@@ -2357,7 +2392,7 @@ func checkCloudAPIIsEnabled(ctx context.Context, httpClient *http.Client, projec
		return false, fmt.Errorf("failed to create request: %w", errRequest)
	}
	req.Header.Set("Content-Type", "application/json")
	req.Header.Set("User-Agent", geminiCLIUserAgent)
	req.Header.Set("User-Agent", misc.GeminiCLIUserAgent(""))
	resp, errDo := httpClient.Do(req)
	if errDo != nil {
		return false, fmt.Errorf("failed to execute request: %w", errDo)

@@ -2378,7 +2413,7 @@ func checkCloudAPIIsEnabled(ctx context.Context, httpClient *http.Client, projec
		return false, fmt.Errorf("failed to create request: %w", errRequest)
	}
	req.Header.Set("Content-Type", "application/json")
	req.Header.Set("User-Agent", geminiCLIUserAgent)
	req.Header.Set("User-Agent", misc.GeminiCLIUserAgent(""))
	resp, errDo = httpClient.Do(req)
	if errDo != nil {
		return false, fmt.Errorf("failed to execute request: %w", errDo)

@@ -0,0 +1,129 @@

package management

import (
	"context"
	"encoding/json"
	"net/http"
	"net/http/httptest"
	"net/url"
	"os"
	"path/filepath"
	"testing"

	"github.com/gin-gonic/gin"
	"github.com/router-for-me/CLIProxyAPI/v6/internal/config"
	coreauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/cliproxy/auth"
)

func TestDeleteAuthFile_UsesAuthPathFromManager(t *testing.T) {
	t.Setenv("MANAGEMENT_PASSWORD", "")
	gin.SetMode(gin.TestMode)

	tempDir := t.TempDir()
	authDir := filepath.Join(tempDir, "auth")
	externalDir := filepath.Join(tempDir, "external")
	if errMkdirAuth := os.MkdirAll(authDir, 0o700); errMkdirAuth != nil {
		t.Fatalf("failed to create auth dir: %v", errMkdirAuth)
	}
	if errMkdirExternal := os.MkdirAll(externalDir, 0o700); errMkdirExternal != nil {
		t.Fatalf("failed to create external dir: %v", errMkdirExternal)
	}

	fileName := "codex-user@example.com-plus.json"
	shadowPath := filepath.Join(authDir, fileName)
	realPath := filepath.Join(externalDir, fileName)
	if errWriteShadow := os.WriteFile(shadowPath, []byte(`{"type":"codex","email":"shadow@example.com"}`), 0o600); errWriteShadow != nil {
		t.Fatalf("failed to write shadow file: %v", errWriteShadow)
	}
	if errWriteReal := os.WriteFile(realPath, []byte(`{"type":"codex","email":"real@example.com"}`), 0o600); errWriteReal != nil {
		t.Fatalf("failed to write real file: %v", errWriteReal)
	}

	manager := coreauth.NewManager(nil, nil, nil)
	record := &coreauth.Auth{
		ID: "legacy/" + fileName,
		FileName: fileName,
		Provider: "codex",
		Status: coreauth.StatusError,
		Unavailable: true,
		Attributes: map[string]string{
			"path": realPath,
		},
		Metadata: map[string]any{
			"type": "codex",
			"email": "real@example.com",
		},
	}
	if _, errRegister := manager.Register(context.Background(), record); errRegister != nil {
		t.Fatalf("failed to register auth record: %v", errRegister)
	}

	h := NewHandlerWithoutConfigFilePath(&config.Config{AuthDir: authDir}, manager)
	h.tokenStore = &memoryAuthStore{}

	deleteRec := httptest.NewRecorder()
	deleteCtx, _ := gin.CreateTestContext(deleteRec)
	deleteReq := httptest.NewRequest(http.MethodDelete, "/v0/management/auth-files?name="+url.QueryEscape(fileName), nil)
	deleteCtx.Request = deleteReq
	h.DeleteAuthFile(deleteCtx)

	if deleteRec.Code != http.StatusOK {
		t.Fatalf("expected delete status %d, got %d with body %s", http.StatusOK, deleteRec.Code, deleteRec.Body.String())
	}
	if _, errStatReal := os.Stat(realPath); !os.IsNotExist(errStatReal) {
		t.Fatalf("expected managed auth file to be removed, stat err: %v", errStatReal)
	}
	if _, errStatShadow := os.Stat(shadowPath); errStatShadow != nil {
		t.Fatalf("expected shadow auth file to remain, stat err: %v", errStatShadow)
	}

	listRec := httptest.NewRecorder()
	listCtx, _ := gin.CreateTestContext(listRec)
	listReq := httptest.NewRequest(http.MethodGet, "/v0/management/auth-files", nil)
	listCtx.Request = listReq
	h.ListAuthFiles(listCtx)

	if listRec.Code != http.StatusOK {
		t.Fatalf("expected list status %d, got %d with body %s", http.StatusOK, listRec.Code, listRec.Body.String())
	}
	var listPayload map[string]any
	if errUnmarshal := json.Unmarshal(listRec.Body.Bytes(), &listPayload); errUnmarshal != nil {
		t.Fatalf("failed to decode list payload: %v", errUnmarshal)
	}
	filesRaw, ok := listPayload["files"].([]any)
	if !ok {
		t.Fatalf("expected files array, payload: %#v", listPayload)
	}
	if len(filesRaw) != 0 {
		t.Fatalf("expected removed auth to be hidden from list, got %d entries", len(filesRaw))
	}
}


func TestDeleteAuthFile_FallbackToAuthDirPath(t *testing.T) {
	t.Setenv("MANAGEMENT_PASSWORD", "")
	gin.SetMode(gin.TestMode)

	authDir := t.TempDir()
	fileName := "fallback-user.json"
	filePath := filepath.Join(authDir, fileName)
	if errWrite := os.WriteFile(filePath, []byte(`{"type":"codex"}`), 0o600); errWrite != nil {
		t.Fatalf("failed to write auth file: %v", errWrite)
	}

	manager := coreauth.NewManager(nil, nil, nil)
	h := NewHandlerWithoutConfigFilePath(&config.Config{AuthDir: authDir}, manager)
	h.tokenStore = &memoryAuthStore{}

	deleteRec := httptest.NewRecorder()
	deleteCtx, _ := gin.CreateTestContext(deleteRec)
	deleteReq := httptest.NewRequest(http.MethodDelete, "/v0/management/auth-files?name="+url.QueryEscape(fileName), nil)
	deleteCtx.Request = deleteReq
	h.DeleteAuthFile(deleteCtx)

	if deleteRec.Code != http.StatusOK {
		t.Fatalf("expected delete status %d, got %d with body %s", http.StatusOK, deleteRec.Code, deleteRec.Body.String())
	}
	if _, errStat := os.Stat(filePath); !os.IsNotExist(errStat) {
		t.Fatalf("expected auth file to be removed from auth dir, stat err: %v", errStat)
	}
}

@@ -516,12 +516,13 @@ func (h *Handler) PutVertexCompatKeys(c *gin.Context) {

}
func (h *Handler) PatchVertexCompatKey(c *gin.Context) {
	type vertexCompatPatch struct {
		APIKey   *string                     `json:"api-key"`
		Prefix   *string                     `json:"prefix"`
		BaseURL  *string                     `json:"base-url"`
		ProxyURL *string                     `json:"proxy-url"`
		Headers  *map[string]string          `json:"headers"`
		Models   *[]config.VertexCompatModel `json:"models"`
		APIKey         *string                     `json:"api-key"`
		Prefix         *string                     `json:"prefix"`
		BaseURL        *string                     `json:"base-url"`
		ProxyURL       *string                     `json:"proxy-url"`
		Headers        *map[string]string          `json:"headers"`
		Models         *[]config.VertexCompatModel `json:"models"`
		ExcludedModels *[]string                   `json:"excluded-models"`
	}
	var body struct {
		Index *int `json:"index"`

@@ -585,6 +586,9 @@ func (h *Handler) PatchVertexCompatKey(c *gin.Context) {
	if body.Value.Models != nil {
		entry.Models = append([]config.VertexCompatModel(nil), (*body.Value.Models)...)
	}
	if body.Value.ExcludedModels != nil {
		entry.ExcludedModels = config.NormalizeExcludedModels(*body.Value.ExcludedModels)
	}
	normalizeVertexCompatKey(&entry)
	h.cfg.VertexCompatAPIKey[targetIndex] = entry
	h.cfg.SanitizeVertexCompatKeys()

@@ -1025,6 +1029,7 @@ func normalizeVertexCompatKey(entry *config.VertexCompatKey) {
	entry.BaseURL = strings.TrimSpace(entry.BaseURL)
	entry.ProxyURL = strings.TrimSpace(entry.ProxyURL)
	entry.Headers = config.NormalizeHeaders(entry.Headers)
	entry.ExcludedModels = config.NormalizeExcludedModels(entry.ExcludedModels)
	if len(entry.Models) == 0 {
		return
	}
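The `vertexCompatPatch` struct uses pointer fields so a PATCH body can distinguish "field omitted" from "field set to an empty value". The sketch below shows that pattern with `encoding/json` for the new `excluded-models` field. The `entry` and `patch` types are simplified stand-ins, and `normalizeExcluded` is a hypothetical normalizer (trim, drop empties, de-duplicate); the behavior of the real `config.NormalizeExcludedModels` is not shown in this diff.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// entry and patch are simplified stand-ins for the config types.
type entry struct {
	BaseURL        string
	ExcludedModels []string
}

type patch struct {
	BaseURL        *string   `json:"base-url"`
	ExcludedModels *[]string `json:"excluded-models"`
}

// normalizeExcluded is a hypothetical normalizer: trim, drop empties, de-duplicate.
func normalizeExcluded(in []string) []string {
	seen := make(map[string]struct{}, len(in))
	out := make([]string, 0, len(in))
	for _, m := range in {
		m = strings.TrimSpace(m)
		if m == "" {
			continue
		}
		if _, dup := seen[m]; dup {
			continue
		}
		seen[m] = struct{}{}
		out = append(out, m)
	}
	return out
}

// applyPatch only touches fields that were present in the JSON body.
func applyPatch(e entry, raw []byte) (entry, error) {
	var p patch
	if err := json.Unmarshal(raw, &p); err != nil {
		return e, err
	}
	if p.BaseURL != nil {
		e.BaseURL = strings.TrimSpace(*p.BaseURL)
	}
	if p.ExcludedModels != nil {
		e.ExcludedModels = normalizeExcluded(*p.ExcludedModels)
	}
	return e, nil
}

func main() {
	base := entry{BaseURL: "https://example.invalid", ExcludedModels: []string{"imagen-*"}}

	e, _ := applyPatch(base, []byte(`{"base-url":" https://other.invalid "}`))
	fmt.Println(e.BaseURL, e.ExcludedModels) // excluded-models left untouched

	e, _ = applyPatch(base, []byte(`{"excluded-models":[]}`))
	fmt.Println(len(e.ExcludedModels)) // explicit empty list clears the field
}
```

With this shape, sending `"excluded-models": []` clears the list, while leaving the key out keeps the existing value.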

@@ -14,6 +14,7 @@ import (

	"strings"

	"github.com/gin-gonic/gin"
	"github.com/router-for-me/CLIProxyAPI/v6/internal/misc"
	log "github.com/sirupsen/logrus"
)

@@ -76,6 +77,9 @@ func createReverseProxy(upstreamURL string, secretSource SecretSource) (*httputi
		req.Header.Del("X-Api-Key")
		req.Header.Del("X-Goog-Api-Key")

		// Remove proxy, client identity, and browser fingerprint headers
		misc.ScrubProxyAndFingerprintHeaders(req)

		// Remove query-based credentials if they match the authenticated client API key.
		// This prevents leaking client auth material to the Amp upstream while avoiding
		// breaking unrelated upstream query parameters.
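The comments in this hunk describe two clean-up steps before a request is forwarded upstream: scrubbing identifying headers, and dropping query credentials only when they equal the client's own API key. A rough sketch of both steps with the standard library follows. The header list, the parameter names `key` and `auth_token`, and both function names are assumptions for illustration; what `misc.ScrubProxyAndFingerprintHeaders` actually removes is not visible in this diff.

```go
package main

import (
	"fmt"
	"net/http"
	"net/url"
)

// scrubbedHeaders is a hypothetical list of proxy-related headers.
var scrubbedHeaders = []string{"X-Forwarded-For", "X-Real-Ip", "Forwarded", "Via"}

// scrubHeaders deletes every header named in scrubbedHeaders.
func scrubHeaders(h http.Header) {
	for _, name := range scrubbedHeaders {
		h.Del(name)
	}
}

// stripMatchingCredentials removes values of the given query parameters only
// when they equal clientKey, leaving unrelated parameters untouched.
func stripMatchingCredentials(rawURL, clientKey string, params ...string) (string, error) {
	u, err := url.Parse(rawURL)
	if err != nil {
		return "", err
	}
	if clientKey == "" {
		return u.String(), nil
	}
	q := u.Query()
	changed := false
	for _, param := range params {
		vals, ok := q[param]
		if !ok {
			continue
		}
		kept := make([]string, 0, len(vals))
		for _, v := range vals {
			if v != clientKey {
				kept = append(kept, v)
			}
		}
		if len(kept) == len(vals) {
			continue
		}
		changed = true
		if len(kept) == 0 {
			q.Del(param)
		} else {
			q[param] = kept
		}
	}
	if changed {
		u.RawQuery = q.Encode()
	}
	return u.String(), nil
}

func main() {
	h := http.Header{}
	h.Set("X-Forwarded-For", "203.0.113.7")
	h.Set("Content-Type", "application/json")
	scrubHeaders(h)
	fmt.Println(len(h))

	out, err := stripMatchingCredentials(
		"https://upstream.invalid/api?key=client-secret&model=x",
		"client-secret", "key", "auth_token")
	fmt.Println(out, err)
}
```

Comparing against the authenticated key, rather than deleting the parameter outright, is what lets an upstream that legitimately uses a `key` parameter for something else keep working.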


@@ -20,6 +20,7 @@ import (

	"github.com/router-for-me/CLIProxyAPI/v6/internal/auth/gemini"
	"github.com/router-for-me/CLIProxyAPI/v6/internal/config"
	"github.com/router-for-me/CLIProxyAPI/v6/internal/interfaces"
	"github.com/router-for-me/CLIProxyAPI/v6/internal/misc"
	sdkAuth "github.com/router-for-me/CLIProxyAPI/v6/sdk/auth"
	cliproxyauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/cliproxy/auth"
	log "github.com/sirupsen/logrus"

@@ -27,11 +28,8 @@ import (
)

const (
	geminiCLIEndpoint       = "https://cloudcode-pa.googleapis.com"
	geminiCLIVersion        = "v1internal"
	geminiCLIUserAgent      = "google-api-nodejs-client/9.15.1"
	geminiCLIApiClient      = "gl-node/22.17.0"
	geminiCLIClientMetadata = "ideType=IDE_UNSPECIFIED,platform=PLATFORM_UNSPECIFIED,pluginType=GEMINI"
	geminiCLIEndpoint = "https://cloudcode-pa.googleapis.com"
	geminiCLIVersion  = "v1internal"
)

type projectSelectionRequiredError struct{}

@@ -409,9 +407,7 @@ func callGeminiCLI(ctx context.Context, httpClient *http.Client, endpoint string
		return fmt.Errorf("create request: %w", errRequest)
	}
	req.Header.Set("Content-Type", "application/json")
	req.Header.Set("User-Agent", geminiCLIUserAgent)
	req.Header.Set("X-Goog-Api-Client", geminiCLIApiClient)
	req.Header.Set("Client-Metadata", geminiCLIClientMetadata)
	req.Header.Set("User-Agent", misc.GeminiCLIUserAgent(""))

	resp, errDo := httpClient.Do(req)
	if errDo != nil {

@@ -630,7 +626,7 @@ func checkCloudAPIIsEnabled(ctx context.Context, httpClient *http.Client, projec
		return false, fmt.Errorf("failed to create request: %w", errRequest)
	}
	req.Header.Set("Content-Type", "application/json")
	req.Header.Set("User-Agent", geminiCLIUserAgent)
	req.Header.Set("User-Agent", misc.GeminiCLIUserAgent(""))
	resp, errDo := httpClient.Do(req)
	if errDo != nil {
		return false, fmt.Errorf("failed to execute request: %w", errDo)

@@ -651,7 +647,7 @@ func checkCloudAPIIsEnabled(ctx context.Context, httpClient *http.Client, projec
		return false, fmt.Errorf("failed to create request: %w", errRequest)
	}
	req.Header.Set("Content-Type", "application/json")
	req.Header.Set("User-Agent", geminiCLIUserAgent)
	req.Header.Set("User-Agent", misc.GeminiCLIUserAgent(""))
	resp, errDo = httpClient.Do(req)
	if errDo != nil {
		return false, fmt.Errorf("failed to execute request: %w", errDo)

@@ -516,16 +516,6 @@ func LoadConfig(configFile string) (*Config, error) {

// If optional is true and the file is missing, it returns an empty Config.
// If optional is true and the file is empty or invalid, it returns an empty Config.
func LoadConfigOptional(configFile string, optional bool) (*Config, error) {
	// NOTE: Startup oauth-model-alias migration is intentionally disabled.
	// Reason: avoid mutating config.yaml during server startup.
	// Re-enable the block below if automatic startup migration is needed again.
	// if migrated, err := MigrateOAuthModelAlias(configFile); err != nil {
	// 	// Log warning but don't fail - config loading should still work
	// 	fmt.Printf("Warning: oauth-model-alias migration failed: %v\n", err)
	// } else if migrated {
	// 	fmt.Println("Migrated oauth-model-mappings to oauth-model-alias")
	// }

	// Read the entire configuration file into memory.
	data, err := os.ReadFile(configFile)
	if err != nil {

@@ -1560,9 +1550,6 @@ func pruneMappingToGeneratedKeys(dstRoot, srcRoot *yaml.Node, key string) {
	srcIdx := findMapKeyIndex(srcRoot, key)
	if srcIdx < 0 {
		// Keep an explicit empty mapping for oauth-model-alias when it was previously present.
		//
		// Rationale: LoadConfig runs MigrateOAuthModelAlias before unmarshalling. If the
		// oauth-model-alias key is missing, migration will add the default antigravity aliases.
		// When users delete the last channel from oauth-model-alias via the management API,
		// we want that deletion to persist across hot reloads and restarts.
		if key == "oauth-model-alias" {

@@ -1,277 +0,0 @@

package config

import (
	"os"
	"strings"

	"gopkg.in/yaml.v3"
)

// antigravityModelConversionTable maps old built-in aliases to actual model names
// for the antigravity channel during migration.
var antigravityModelConversionTable = map[string]string{
	"gemini-2.5-computer-use-preview-10-2025": "rev19-uic3-1p",
	"gemini-3-pro-image-preview": "gemini-3-pro-image",
	"gemini-3-pro-preview": "gemini-3-pro-high",
	"gemini-3-flash-preview": "gemini-3-flash",
	"gemini-claude-sonnet-4-5": "claude-sonnet-4-5",
	"gemini-claude-sonnet-4-5-thinking": "claude-sonnet-4-5-thinking",
	"gemini-claude-opus-4-5-thinking": "claude-opus-4-5-thinking",
	"gemini-claude-opus-4-6-thinking": "claude-opus-4-6-thinking",
}

// defaultAntigravityAliases returns the default oauth-model-alias configuration
// for the antigravity channel when neither field exists.
func defaultAntigravityAliases() []OAuthModelAlias {
	return []OAuthModelAlias{
		{Name: "rev19-uic3-1p", Alias: "gemini-2.5-computer-use-preview-10-2025"},
		{Name: "gemini-3-pro-image", Alias: "gemini-3-pro-image-preview"},
		{Name: "gemini-3-pro-high", Alias: "gemini-3-pro-preview"},
		{Name: "gemini-3-flash", Alias: "gemini-3-flash-preview"},
		{Name: "claude-sonnet-4-5", Alias: "gemini-claude-sonnet-4-5"},
		{Name: "claude-sonnet-4-5-thinking", Alias: "gemini-claude-sonnet-4-5-thinking"},
		{Name: "claude-opus-4-5-thinking", Alias: "gemini-claude-opus-4-5-thinking"},
		{Name: "claude-opus-4-6-thinking", Alias: "gemini-claude-opus-4-6-thinking"},
	}
}

// MigrateOAuthModelAlias checks for and performs migration from oauth-model-mappings
// to oauth-model-alias at startup. Returns true if migration was performed.
//
// Migration flow:
// 1. Check if oauth-model-alias exists -> skip migration
// 2. Check if oauth-model-mappings exists -> convert and migrate
//   - For antigravity channel, convert old built-in aliases to actual model names
//
// 3. Neither exists -> add default antigravity config
func MigrateOAuthModelAlias(configFile string) (bool, error) {
	data, err := os.ReadFile(configFile)
	if err != nil {
		if os.IsNotExist(err) {
			return false, nil
		}
		return false, err
	}
	if len(data) == 0 {
		return false, nil
	}

	// Parse YAML into node tree to preserve structure
	var root yaml.Node
	if err := yaml.Unmarshal(data, &root); err != nil {
		return false, nil
	}
	if root.Kind != yaml.DocumentNode || len(root.Content) == 0 {
		return false, nil
	}
	rootMap := root.Content[0]
	if rootMap == nil || rootMap.Kind != yaml.MappingNode {
		return false, nil
	}

	// Check if oauth-model-alias already exists
	if findMapKeyIndex(rootMap, "oauth-model-alias") >= 0 {
		return false, nil
	}

	// Check if oauth-model-mappings exists
	oldIdx := findMapKeyIndex(rootMap, "oauth-model-mappings")
	if oldIdx >= 0 {
		// Migrate from old field
		return migrateFromOldField(configFile, &root, rootMap, oldIdx)
	}

	// Neither field exists - add default antigravity config
	return addDefaultAntigravityConfig(configFile, &root, rootMap)
}
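The migration flow documented on the removed `MigrateOAuthModelAlias` comes down to a three-way decision on which top-level keys are present. A minimal sketch of just that decision follows. The `action` type, its values, and `decideMigration` are hypothetical names, and a plain map stands in for the parsed YAML root.

```go
package main

import "fmt"

// action names the branch the removed migration would have taken (hypothetical type).
type action string

const (
	skipMigration   action = "skip"
	convertOldField action = "convert-old-field"
	addDefaults     action = "add-defaults"
)

// decideMigration mirrors the documented flow: the new key wins, then the
// old key triggers a conversion, otherwise defaults are added.
func decideMigration(topLevelKeys map[string]bool) action {
	switch {
	case topLevelKeys["oauth-model-alias"]:
		return skipMigration
	case topLevelKeys["oauth-model-mappings"]:
		return convertOldField
	default:
		return addDefaults
	}
}

func main() {
	fmt.Println(decideMigration(map[string]bool{"oauth-model-alias": true}))
	fmt.Println(decideMigration(map[string]bool{"oauth-model-mappings": true}))
	fmt.Println(decideMigration(map[string]bool{"port": true}))
}
```

The third branch is the one that rewrote `config.yaml` even for users who had never configured aliases, which fits the stated reason for disabling the startup migration ("avoid mutating config.yaml during server startup").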


// migrateFromOldField converts oauth-model-mappings to oauth-model-alias
func migrateFromOldField(configFile string, root *yaml.Node, rootMap *yaml.Node, oldIdx int) (bool, error) {
	if oldIdx+1 >= len(rootMap.Content) {
		return false, nil
	}
	oldValue := rootMap.Content[oldIdx+1]
	if oldValue == nil || oldValue.Kind != yaml.MappingNode {
		return false, nil
	}

	// Parse the old aliases
	oldAliases := parseOldAliasNode(oldValue)
	if len(oldAliases) == 0 {
		// Remove the old field and write
		removeMapKeyByIndex(rootMap, oldIdx)
		return writeYAMLNode(configFile, root)
	}

	// Convert model names for antigravity channel
	newAliases := make(map[string][]OAuthModelAlias, len(oldAliases))
	for channel, entries := range oldAliases {
		converted := make([]OAuthModelAlias, 0, len(entries))
		for _, entry := range entries {
			newEntry := OAuthModelAlias{
				Name: entry.Name,
				Alias: entry.Alias,
				Fork: entry.Fork,
			}
			// Convert model names for antigravity channel
			if strings.EqualFold(channel, "antigravity") {
				if actual, ok := antigravityModelConversionTable[entry.Name]; ok {
					newEntry.Name = actual
				}
			}
			converted = append(converted, newEntry)
		}
		newAliases[channel] = converted
	}

	// For antigravity channel, supplement missing default aliases
	if antigravityEntries, exists := newAliases["antigravity"]; exists {
		// Build a set of already configured model names (upstream names)
		configuredModels := make(map[string]bool, len(antigravityEntries))
		for _, entry := range antigravityEntries {
			configuredModels[entry.Name] = true
		}

		// Add missing default aliases
		for _, defaultAlias := range defaultAntigravityAliases() {
			if !configuredModels[defaultAlias.Name] {
				antigravityEntries = append(antigravityEntries, defaultAlias)
			}
		}
		newAliases["antigravity"] = antigravityEntries
	}

	// Build new node
	newNode := buildOAuthModelAliasNode(newAliases)

	// Replace old key with new key and value
	rootMap.Content[oldIdx].Value = "oauth-model-alias"
	rootMap.Content[oldIdx+1] = newNode

	return writeYAMLNode(configFile, root)
}
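For the antigravity channel, the removed `migrateFromOldField` does two things: it rewrites old built-in names through the conversion table, then appends any default alias whose upstream name is not already configured. The sketch below isolates that logic on plain slices. The `alias` type, `migrateAntigravity`, and the two-entry subset of the table are simplifications for illustration only.

```go
package main

import "fmt"

// alias is a simplified stand-in for OAuthModelAlias.
type alias struct {
	Name, Alias string
}

// conversion is a two-entry subset of antigravityModelConversionTable.
var conversion = map[string]string{
	"gemini-3-pro-preview":   "gemini-3-pro-high",
	"gemini-3-flash-preview": "gemini-3-flash",
}

// defaults is the matching subset of defaultAntigravityAliases.
func defaults() []alias {
	return []alias{
		{Name: "gemini-3-pro-high", Alias: "gemini-3-pro-preview"},
		{Name: "gemini-3-flash", Alias: "gemini-3-flash-preview"},
	}
}

// migrateAntigravity converts old names, then adds defaults that are still missing.
func migrateAntigravity(old []alias) []alias {
	out := make([]alias, 0, len(old))
	configured := make(map[string]bool, len(old))
	for _, e := range old {
		if actual, ok := conversion[e.Name]; ok {
			e.Name = actual
		}
		out = append(out, e)
		configured[e.Name] = true
	}
	for _, d := range defaults() {
		if !configured[d.Name] {
			out = append(out, d)
		}
	}
	return out
}

func main() {
	migrated := migrateAntigravity([]alias{{Name: "gemini-3-pro-preview", Alias: "my-pro"}})
	for _, a := range migrated {
		fmt.Printf("%s -> %s\n", a.Name, a.Alias)
	}
}
```

Because the "already configured" check keys on the upstream name after conversion, a user's custom alias for a model suppresses the default alias for that same model instead of ending up alongside it.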

|

||||

|

||||

// addDefaultAntigravityConfig adds the default antigravity configuration

|

||||

func addDefaultAntigravityConfig(configFile string, root *yaml.Node, rootMap *yaml.Node) (bool, error) {

|

||||

defaults := map[string][]OAuthModelAlias{

|

||||

"antigravity": defaultAntigravityAliases(),

|

||||

}

|

||||

newNode := buildOAuthModelAliasNode(defaults)

|

||||

|

||||

// Add new key-value pair

|

||||

keyNode := &yaml.Node{Kind: yaml.ScalarNode, Tag: "!!str", Value: "oauth-model-alias"}

|

||||

rootMap.Content = append(rootMap.Content, keyNode, newNode)

|

||||

|

||||

return writeYAMLNode(configFile, root)

|

||||

}

// parseOldAliasNode parses the old oauth-model-mappings node structure
func parseOldAliasNode(node *yaml.Node) map[string][]OAuthModelAlias {
	if node == nil || node.Kind != yaml.MappingNode {
		return nil
	}
	result := make(map[string][]OAuthModelAlias)
	for i := 0; i+1 < len(node.Content); i += 2 {
		channelNode := node.Content[i]
		entriesNode := node.Content[i+1]
		if channelNode == nil || entriesNode == nil {
			continue
		}
		channel := strings.ToLower(strings.TrimSpace(channelNode.Value))
		if channel == "" || entriesNode.Kind != yaml.SequenceNode {
			continue
		}
		entries := make([]OAuthModelAlias, 0, len(entriesNode.Content))
		for _, entryNode := range entriesNode.Content {
			if entryNode == nil || entryNode.Kind != yaml.MappingNode {
				continue
			}
			entry := parseAliasEntry(entryNode)
			if entry.Name != "" && entry.Alias != "" {
				entries = append(entries, entry)
			}
		}
		if len(entries) > 0 {
			result[channel] = entries
		}
	}
	return result
}

// parseAliasEntry parses a single alias entry node
func parseAliasEntry(node *yaml.Node) OAuthModelAlias {
	var entry OAuthModelAlias
	for i := 0; i+1 < len(node.Content); i += 2 {
		keyNode := node.Content[i]
		valNode := node.Content[i+1]
		if keyNode == nil || valNode == nil {
			continue
		}
		switch strings.ToLower(strings.TrimSpace(keyNode.Value)) {
		case "name":
			entry.Name = strings.TrimSpace(valNode.Value)
		case "alias":
			entry.Alias = strings.TrimSpace(valNode.Value)
		case "fork":
			entry.Fork = strings.ToLower(strings.TrimSpace(valNode.Value)) == "true"
		}
	}
	return entry
}

// buildOAuthModelAliasNode creates a YAML node for oauth-model-alias
func buildOAuthModelAliasNode(aliases map[string][]OAuthModelAlias) *yaml.Node {
	node := &yaml.Node{Kind: yaml.MappingNode, Tag: "!!map"}
	for channel, entries := range aliases {
		channelNode := &yaml.Node{Kind: yaml.ScalarNode, Tag: "!!str", Value: channel}
		entriesNode := &yaml.Node{Kind: yaml.SequenceNode, Tag: "!!seq"}
		for _, entry := range entries {
			entryNode := &yaml.Node{Kind: yaml.MappingNode, Tag: "!!map"}
			entryNode.Content = append(entryNode.Content,
				&yaml.Node{Kind: yaml.ScalarNode, Tag: "!!str", Value: "name"},
				&yaml.Node{Kind: yaml.ScalarNode, Tag: "!!str", Value: entry.Name},
				&yaml.Node{Kind: yaml.ScalarNode, Tag: "!!str", Value: "alias"},
				&yaml.Node{Kind: yaml.ScalarNode, Tag: "!!str", Value: entry.Alias},
			)
			if entry.Fork {
				entryNode.Content = append(entryNode.Content,
					&yaml.Node{Kind: yaml.ScalarNode, Tag: "!!str", Value: "fork"},
					&yaml.Node{Kind: yaml.ScalarNode, Tag: "!!bool", Value: "true"},
				)
			}
			entriesNode.Content = append(entriesNode.Content, entryNode)
		}
		node.Content = append(node.Content, channelNode, entriesNode)
	}
	return node
}

// removeMapKeyByIndex removes a key-value pair from a mapping node by index
func removeMapKeyByIndex(mapNode *yaml.Node, keyIdx int) {
	if mapNode == nil || mapNode.Kind != yaml.MappingNode {
		return
	}
	if keyIdx < 0 || keyIdx+1 >= len(mapNode.Content) {
		return
	}
	mapNode.Content = append(mapNode.Content[:keyIdx], mapNode.Content[keyIdx+2:]...)
}

// writeYAMLNode writes the YAML node tree back to file
func writeYAMLNode(configFile string, root *yaml.Node) (bool, error) {
	f, err := os.Create(configFile)
	if err != nil {
		return false, err
	}
	defer f.Close()

	enc := yaml.NewEncoder(f)
	enc.SetIndent(2)
	if err := enc.Encode(root); err != nil {
		return false, err
	}
	if err := enc.Close(); err != nil {
		return false, err
	}
	return true, nil
}
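The helpers above rely on how yaml.v3 lays out a mapping node: keys and values alternate in `Content`, so the value for the key at index `i` sits at `i+1`. The following is a minimal, dependency-free sketch of that index arithmetic, using a plain string slice in place of `[]*yaml.Node`. The names `findKey` and `removePair` are hypothetical and are not part of this commit.

```go
package main

import "fmt"

// findKey returns the index of key in a flattened key/value slice
// (key0, val0, key1, val1, ...), or -1 if the key is absent.
// Hypothetical helper; it mirrors the "i += 2" walk used by the migration code.
func findKey(content []string, key string) int {
	for i := 0; i+1 < len(content); i += 2 {
		if content[i] == key {
			return i
		}
	}
	return -1
}

// removePair drops the key at keyIdx together with its value, using the
// same bounds checks as removeMapKeyByIndex. Hypothetical helper.
func removePair(content []string, keyIdx int) []string {
	if keyIdx < 0 || keyIdx+1 >= len(content) {
		return content
	}
	return append(content[:keyIdx], content[keyIdx+2:]...)
}

func main() {
	content := []string{"debug", "true", "oauth-model-mappings", "old", "port", "8080"}
	idx := findKey(content, "oauth-model-mappings")
	content = removePair(content, idx)
	fmt.Println(idx, content)
}
```

Because only even indices are compared, a value that happens to equal a key name is never matched, which is the same guarantee the pairwise loop gives the real code.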

@@ -1,245 +0,0 @@
package config

import (
	"os"
	"path/filepath"
	"strings"
	"testing"

	"gopkg.in/yaml.v3"
)

func TestMigrateOAuthModelAlias_SkipsIfNewFieldExists(t *testing.T) {
	t.Parallel()

	dir := t.TempDir()
	configFile := filepath.Join(dir, "config.yaml")

	content := `oauth-model-alias:
  gemini-cli:
    - name: "gemini-2.5-pro"
      alias: "g2.5p"
`
	if err := os.WriteFile(configFile, []byte(content), 0644); err != nil {
		t.Fatal(err)
	}

	migrated, err := MigrateOAuthModelAlias(configFile)
	if err != nil {
		t.Fatalf("unexpected error: %v", err)
	}
	if migrated {
		t.Fatal("expected no migration when oauth-model-alias already exists")
	}

	// Verify file unchanged
	data, _ := os.ReadFile(configFile)
	if !strings.Contains(string(data), "oauth-model-alias:") {
		t.Fatal("file should still contain oauth-model-alias")
	}
}

func TestMigrateOAuthModelAlias_MigratesOldField(t *testing.T) {
	t.Parallel()

	dir := t.TempDir()
	configFile := filepath.Join(dir, "config.yaml")

	content := `oauth-model-mappings:
  gemini-cli:
    - name: "gemini-2.5-pro"
      alias: "g2.5p"
      fork: true
`
	if err := os.WriteFile(configFile, []byte(content), 0644); err != nil {
		t.Fatal(err)
	}

	migrated, err := MigrateOAuthModelAlias(configFile)
	if err != nil {
		t.Fatalf("unexpected error: %v", err)
	}
	if !migrated {
		t.Fatal("expected migration to occur")
	}

	// Verify new field exists and old field removed
	data, _ := os.ReadFile(configFile)
	if strings.Contains(string(data), "oauth-model-mappings:") {
		t.Fatal("old field should be removed")
	}
	if !strings.Contains(string(data), "oauth-model-alias:") {
		t.Fatal("new field should exist")
	}

	// Parse and verify structure
	var root yaml.Node
	if err := yaml.Unmarshal(data, &root); err != nil {
		t.Fatal(err)
	}
}

func TestMigrateOAuthModelAlias_ConvertsAntigravityModels(t *testing.T) {
	t.Parallel()

	dir := t.TempDir()
	configFile := filepath.Join(dir, "config.yaml")

	// Use old model names that should be converted
	content := `oauth-model-mappings:
  antigravity:
    - name: "gemini-2.5-computer-use-preview-10-2025"
      alias: "computer-use"
    - name: "gemini-3-pro-preview"
      alias: "g3p"
`
	if err := os.WriteFile(configFile, []byte(content), 0644); err != nil {
		t.Fatal(err)
	}

	migrated, err := MigrateOAuthModelAlias(configFile)
	if err != nil {
		t.Fatalf("unexpected error: %v", err)
	}
	if !migrated {
		t.Fatal("expected migration to occur")
	}

	// Verify model names were converted
	data, _ := os.ReadFile(configFile)
	content = string(data)
	if !strings.Contains(content, "rev19-uic3-1p") {
		t.Fatal("expected gemini-2.5-computer-use-preview-10-2025 to be converted to rev19-uic3-1p")
	}
	if !strings.Contains(content, "gemini-3-pro-high") {
		t.Fatal("expected gemini-3-pro-preview to be converted to gemini-3-pro-high")
	}

	// Verify missing default aliases were supplemented
	if !strings.Contains(content, "gemini-3-pro-image") {
		t.Fatal("expected missing default alias gemini-3-pro-image to be added")
	}
	if !strings.Contains(content, "gemini-3-flash") {
		t.Fatal("expected missing default alias gemini-3-flash to be added")
	}
	if !strings.Contains(content, "claude-sonnet-4-5") {
		t.Fatal("expected missing default alias claude-sonnet-4-5 to be added")
	}
	if !strings.Contains(content, "claude-sonnet-4-5-thinking") {
		t.Fatal("expected missing default alias claude-sonnet-4-5-thinking to be added")
	}
	if !strings.Contains(content, "claude-opus-4-5-thinking") {
		t.Fatal("expected missing default alias claude-opus-4-5-thinking to be added")
	}
	if !strings.Contains(content, "claude-opus-4-6-thinking") {
		t.Fatal("expected missing default alias claude-opus-4-6-thinking to be added")
	}
}

func TestMigrateOAuthModelAlias_AddsDefaultIfNeitherExists(t *testing.T) {
	t.Parallel()

	dir := t.TempDir()
	configFile := filepath.Join(dir, "config.yaml")

	content := `debug: true
port: 8080
`
	if err := os.WriteFile(configFile, []byte(content), 0644); err != nil {
		t.Fatal(err)
	}

	migrated, err := MigrateOAuthModelAlias(configFile)
	if err != nil {
		t.Fatalf("unexpected error: %v", err)
	}
	if !migrated {
		t.Fatal("expected migration to add default config")
	}

	// Verify default antigravity config was added
	data, _ := os.ReadFile(configFile)
	content = string(data)
	if !strings.Contains(content, "oauth-model-alias:") {
		t.Fatal("expected oauth-model-alias to be added")
	}
	if !strings.Contains(content, "antigravity:") {
		t.Fatal("expected antigravity channel to be added")
	}
	if !strings.Contains(content, "rev19-uic3-1p") {
		t.Fatal("expected default antigravity aliases to include rev19-uic3-1p")
	}
}

func TestMigrateOAuthModelAlias_PreservesOtherConfig(t *testing.T) {
	t.Parallel()

	dir := t.TempDir()
	configFile := filepath.Join(dir, "config.yaml")

	content := `debug: true
port: 8080
oauth-model-mappings:
  gemini-cli:
    - name: "test"
      alias: "t"
api-keys:
  - "key1"
  - "key2"
`
	if err := os.WriteFile(configFile, []byte(content), 0644); err != nil {
		t.Fatal(err)
	}

	migrated, err := MigrateOAuthModelAlias(configFile)
	if err != nil {
		t.Fatalf("unexpected error: %v", err)
	}
	if !migrated {
		t.Fatal("expected migration to occur")
	}

	// Verify other config preserved
	data, _ := os.ReadFile(configFile)
	content = string(data)
	if !strings.Contains(content, "debug: true") {
		t.Fatal("expected debug field to be preserved")
	}
	if !strings.Contains(content, "port: 8080") {
		t.Fatal("expected port field to be preserved")
	}
	if !strings.Contains(content, "api-keys:") {
		t.Fatal("expected api-keys field to be preserved")
	}
}

func TestMigrateOAuthModelAlias_NonexistentFile(t *testing.T) {
	t.Parallel()

	migrated, err := MigrateOAuthModelAlias("/nonexistent/path/config.yaml")
	if err != nil {
		t.Fatalf("unexpected error for nonexistent file: %v", err)
	}
	if migrated {
		t.Fatal("expected no migration for nonexistent file")
	}
}

func TestMigrateOAuthModelAlias_EmptyFile(t *testing.T) {
	t.Parallel()

	dir := t.TempDir()
	configFile := filepath.Join(dir, "config.yaml")

	if err := os.WriteFile(configFile, []byte(""), 0644); err != nil {
		t.Fatal(err)
	}

	migrated, err := MigrateOAuthModelAlias(configFile)
	if err != nil {
		t.Fatalf("unexpected error: %v", err)
	}
	if migrated {
		t.Fatal("expected no migration for empty file")
	}
}

@@ -34,6 +34,9 @@ type VertexCompatKey struct {

	// Models defines the model configurations including aliases for routing.
	Models []VertexCompatModel `yaml:"models,omitempty" json:"models,omitempty"`

	// ExcludedModels lists model IDs that should be excluded for this provider.
	ExcludedModels []string `yaml:"excluded-models,omitempty" json:"excluded-models,omitempty"`
}

func (k VertexCompatKey) GetAPIKey() string { return k.APIKey }

@@ -74,6 +77,7 @@ func (cfg *Config) SanitizeVertexCompatKeys() {
		}
		entry.ProxyURL = strings.TrimSpace(entry.ProxyURL)
		entry.Headers = NormalizeHeaders(entry.Headers)
		entry.ExcludedModels = NormalizeExcludedModels(entry.ExcludedModels)

		// Sanitize models: remove entries without valid alias
		sanitizedModels := make([]VertexCompatModel, 0, len(entry.Models))

@@ -4,10 +4,98 @@
package misc

import (
	"fmt"
	"net/http"
	"runtime"
	"strings"
)

const (
	// GeminiCLIVersion is the version string reported in the User-Agent for upstream requests.
	GeminiCLIVersion = "0.31.0"

	// GeminiCLIApiClientHeader is the value for the X-Goog-Api-Client header sent to the Gemini CLI upstream.
	GeminiCLIApiClientHeader = "google-genai-sdk/1.41.0 gl-node/v22.19.0"
)

// geminiCLIOS maps Go runtime OS names to the Node.js-style platform strings used by Gemini CLI.
func geminiCLIOS() string {
	switch runtime.GOOS {
	case "windows":
		return "win32"
	default:
		return runtime.GOOS
	}
}

// geminiCLIArch maps Go runtime architecture names to the Node.js-style arch strings used by Gemini CLI.
func geminiCLIArch() string {
	switch runtime.GOARCH {
	case "amd64":
		return "x64"
	case "386":
		return "x86"
	default:
		return runtime.GOARCH
	}
}

// GeminiCLIUserAgent returns a User-Agent string that matches the Gemini CLI format.
// The model parameter is included in the UA; pass "" or "unknown" when the model is not applicable.
func GeminiCLIUserAgent(model string) string {
	if model == "" {
		model = "unknown"
	}
	return fmt.Sprintf("GeminiCLI/%s/%s (%s; %s)", GeminiCLIVersion, model, geminiCLIOS(), geminiCLIArch())
}
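Since `GeminiCLIUserAgent` reads `runtime.GOOS` and `runtime.GOARCH`, its output depends on the host. To see the resulting format for a given platform, here is a small sketch that takes the OS and architecture as parameters instead. The function names `nodePlatform`, `nodeArch`, and `userAgent` are hypothetical; the mapping itself is copied from the hunk above.

```go
package main

import "fmt"

// nodePlatform converts a Go GOOS value to the platform string used in the hunk above.
func nodePlatform(goos string) string {
	if goos == "windows" {
		return "win32"
	}
	return goos
}

// nodeArch converts a Go GOARCH value to the arch string used in the hunk above.
func nodeArch(goarch string) string {
	switch goarch {
	case "amd64":
		return "x64"
	case "386":
		return "x86"
	default:
		return goarch
	}
}

// userAgent formats the UA the same way GeminiCLIUserAgent does, but takes the
// version, OS and arch as arguments so the result does not depend on the host.
func userAgent(version, model, goos, goarch string) string {
	if model == "" {
		model = "unknown"
	}
	return fmt.Sprintf("GeminiCLI/%s/%s (%s; %s)", version, model, nodePlatform(goos), nodeArch(goarch))
}

func main() {
	fmt.Println(userAgent("0.31.0", "", "windows", "amd64"))
	fmt.Println(userAgent("0.31.0", "gemini-2.5-pro", "darwin", "arm64"))
}
```

Passing the platform in explicitly also makes the mapping easy to cover with table-driven tests, which the `runtime`-based version cannot do without build tags.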

// ScrubProxyAndFingerprintHeaders removes all headers that could reveal
// proxy infrastructure, client identity, or browser fingerprints from an
// outgoing request. This ensures requests to upstream services look like they
// originate directly from a native client rather than a third-party client
// behind a reverse proxy.
func ScrubProxyAndFingerprintHeaders(req *http.Request) {
	if req == nil {
		return
	}

	// --- Proxy tracing headers ---
	req.Header.Del("X-Forwarded-For")
	req.Header.Del("X-Forwarded-Host")
	req.Header.Del("X-Forwarded-Proto")
	req.Header.Del("X-Forwarded-Port")
	req.Header.Del("X-Real-IP")
	req.Header.Del("Forwarded")
	req.Header.Del("Via")

	// --- Client identity headers ---
	req.Header.Del("X-Title")
	req.Header.Del("X-Stainless-Lang")
	req.Header.Del("X-Stainless-Package-Version")
	req.Header.Del("X-Stainless-Os")
	req.Header.Del("X-Stainless-Arch")
	req.Header.Del("X-Stainless-Runtime")
	req.Header.Del("X-Stainless-Runtime-Version")
	req.Header.Del("Http-Referer")
	req.Header.Del("Referer")

	// --- Browser / Chromium fingerprint headers ---
	// These are sent by Electron-based clients (e.g. CherryStudio) using the
	// Fetch API, but NOT by Node.js https module (which Antigravity uses).
	req.Header.Del("Sec-Ch-Ua")
	req.Header.Del("Sec-Ch-Ua-Mobile")
	req.Header.Del("Sec-Ch-Ua-Platform")
	req.Header.Del("Sec-Fetch-Mode")
	req.Header.Del("Sec-Fetch-Site")
	req.Header.Del("Sec-Fetch-Dest")
	req.Header.Del("Priority")

	// --- Encoding negotiation ---
	// Antigravity (Node.js) sends "gzip, deflate, br" by default;
	// Electron-based clients may add "zstd" which is a fingerprint mismatch.
	req.Header.Del("Accept-Encoding")
}

// EnsureHeader ensures that a header exists in the target header map by checking
// multiple sources in order of priority: source headers, existing target headers,
// and finally the default value. It only sets the header if it's not already present

@@ -37,7 +37,7 @@ func GetClaudeModels() []*ModelInfo {
			DisplayName: "Claude 4.6 Sonnet",
			ContextLength: 200000,
			MaxCompletionTokens: 64000,
			Thinking: &ThinkingSupport{Min: 1024, Max: 128000, ZeroAllowed: true, DynamicAllowed: false},
			Thinking: &ThinkingSupport{Min: 1024, Max: 128000, ZeroAllowed: true, DynamicAllowed: false, Levels: []string{"low", "medium", "high"}},
		},
		{
			ID: "claude-opus-4-6",

@@ -49,7 +49,7 @@ func GetClaudeModels() []*ModelInfo {
			Description: "Premium model combining maximum intelligence with practical performance",
			ContextLength: 1000000,
			MaxCompletionTokens: 128000,
			Thinking: &ThinkingSupport{Min: 1024, Max: 128000, ZeroAllowed: true, DynamicAllowed: false},
			Thinking: &ThinkingSupport{Min: 1024, Max: 128000, ZeroAllowed: true, DynamicAllowed: false, Levels: []string{"low", "medium", "high", "max"}},
		},
		{
			ID: "claude-opus-4-5-20251101",

@@ -208,12 +208,27 @@ func GetGeminiModels() []*ModelInfo {
			Name: "models/gemini-3-flash-preview",
			Version: "3.0",
			DisplayName: "Gemini 3 Flash Preview",
			Description: "Gemini 3 Flash Preview",
			Description: "Our most intelligent model built for speed, combining frontier intelligence with superior search and grounding.",
			InputTokenLimit: 1048576,
			OutputTokenLimit: 65536,
			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "low", "medium", "high"}},
		},
		{
			ID: "gemini-3.1-flash-lite-preview",
			Object: "model",
			Created: 1776288000,
			OwnedBy: "google",
			Type: "gemini",
			Name: "models/gemini-3.1-flash-lite-preview",
			Version: "3.1",
			DisplayName: "Gemini 3.1 Flash Lite Preview",
			Description: "Our smallest and most cost effective model, built for at scale usage.",
			InputTokenLimit: 1048576,
			OutputTokenLimit: 65536,
			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}},
		},
		{
			ID: "gemini-3-pro-image-preview",
			Object: "model",

@@ -324,6 +339,21 @@ func GetGeminiVertexModels() []*ModelInfo {
			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}},
		},
		{
			ID: "gemini-3.1-flash-lite-preview",
			Object: "model",
			Created: 1776288000,
			OwnedBy: "google",
			Type: "gemini",
			Name: "models/gemini-3.1-flash-lite-preview",
			Version: "3.1",
			DisplayName: "Gemini 3.1 Flash Lite Preview",
			Description: "Our smallest and most cost effective model, built for at scale usage.",
			InputTokenLimit: 1048576,
			OutputTokenLimit: 65536,
			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}},
		},
		{
			ID: "gemini-3-pro-image-preview",
			Object: "model",

@@ -496,6 +526,21 @@ func GetGeminiCLIModels() []*ModelInfo {
			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "low", "medium", "high"}},
		},
		{
			ID: "gemini-3.1-flash-lite-preview",
			Object: "model",
			Created: 1776288000,
			OwnedBy: "google",
			Type: "gemini",
			Name: "models/gemini-3.1-flash-lite-preview",
			Version: "3.1",
			DisplayName: "Gemini 3.1 Flash Lite Preview",
			Description: "Our smallest and most cost effective model, built for at scale usage.",
			InputTokenLimit: 1048576,
			OutputTokenLimit: 65536,
			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}},
		},
	}
}
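The `ThinkingSupport` literals in these hunks carry a numeric range (`Min`, `Max`), two flags (`ZeroAllowed`, `DynamicAllowed`), and an optional list of named `Levels`. How the project interprets these fields is not shown in this diff, so the following is only a sketch of one plausible reading: a requested budget is clamped into `[Min, Max]`, with zero passed through when `ZeroAllowed` is set. The struct below copies only the fields visible in the diff, and `clampBudget` and `supportsLevel` are hypothetical helpers.

```go
package main

import "fmt"

// ThinkingSupport mirrors only the fields visible in the diff;
// the real type in the repository may define more.
type ThinkingSupport struct {
	Min            int
	Max            int
	ZeroAllowed    bool
	DynamicAllowed bool
	Levels         []string
}

// clampBudget is a hypothetical helper. It assumes a budget of 0 means
// "thinking disabled" and is only honoured when ZeroAllowed is true;
// every other value is clamped into [Min, Max].
func clampBudget(ts ThinkingSupport, budget int) int {
	if budget == 0 && ts.ZeroAllowed {
		return 0
	}
	if budget < ts.Min {
		return ts.Min
	}
	if budget > ts.Max {
		return ts.Max
	}
	return budget
}

// supportsLevel reports whether level appears in ts.Levels (hypothetical helper).
func supportsLevel(ts ThinkingSupport, level string) bool {
	for _, l := range ts.Levels {
		if l == level {
			return true
		}
	}
	return false
}

func main() {
	// Values taken from the gemini-3.1-flash-lite-preview entry above.
	lite := ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}}
	fmt.Println(clampBudget(lite, 0), clampBudget(lite, 50000), supportsLevel(lite, "medium"))
}
```

Under these assumptions the new flash-lite entry would reject a zero budget (raising it to 128) and would not accept the `medium` level, which matches its `Levels: []string{"minimal", "high"}` declaration.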

@@ -592,6 +637,21 @@ func GetAIStudioModels() []*ModelInfo {
			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true},
		},
		{
			ID: "gemini-3.1-flash-lite-preview",
			Object: "model",
			Created: 1776288000,
			OwnedBy: "google",
			Type: "gemini",
			Name: "models/gemini-3.1-flash-lite-preview",
			Version: "3.1",
			DisplayName: "Gemini 3.1 Flash Lite Preview",
			Description: "Our smallest and most cost effective model, built for at scale usage.",
			InputTokenLimit: 1048576,
			OutputTokenLimit: 65536,
			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}},
		},
		{
			ID: "gemini-pro-latest",
			Object: "model",

@@ -827,6 +887,20 @@ func GetOpenAIModels() []*ModelInfo {
			SupportedParameters: []string{"tools"},
			Thinking: &ThinkingSupport{Levels: []string{"low", "medium", "high", "xhigh"}},
		},
		{
			ID: "gpt-5.4",
			Object: "model",
			Created: 1772668800,
			OwnedBy: "openai",
			Type: "openai",
			Version: "gpt-5.4",
			DisplayName: "GPT 5.4",
			Description: "Stable version of GPT 5.4",
			ContextLength: 1_050_000,
			MaxCompletionTokens: 128000,
			SupportedParameters: []string{"tools"},
			Thinking: &ThinkingSupport{Levels: []string{"low", "medium", "high", "xhigh"}},
		},
	}
}

@@ -947,18 +1021,18 @@ type AntigravityModelConfig struct {
// Keys use upstream model names returned by the Antigravity models endpoint.
func GetAntigravityModelConfig() map[string]*AntigravityModelConfig {
	return map[string]*AntigravityModelConfig{
		// "rev19-uic3-1p": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true}},
		"gemini-2.5-flash": {Thinking: &ThinkingSupport{Min: 0, Max: 24576, ZeroAllowed: true, DynamicAllowed: true}},
		"gemini-2.5-flash-lite": {Thinking: &ThinkingSupport{Min: 0, Max: 24576, ZeroAllowed: true, DynamicAllowed: true}},
		"gemini-3-pro-high": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},
		"gemini-3-pro-image": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},
		"gemini-3-pro-low": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},
		"gemini-3.1-pro-high": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},
		"gemini-3.1-pro-low": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},
		"gemini-3.1-flash-image": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}}},
		"gemini-3.1-flash-lite-preview": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}}},
		"gemini-3-flash": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "low", "medium", "high"}}},
		"claude-opus-4-6-thinking": {Thinking: &ThinkingSupport{Min: 1024, Max: 64000, ZeroAllowed: true, DynamicAllowed: true}},
		"claude-sonnet-4-6": {Thinking: &ThinkingSupport{Min: 1024, Max: 64000, ZeroAllowed: true, DynamicAllowed: true}},